Stream processing for the next generation of apps

Tuesday, September 1, 2020

Richard Harris

Successful businesses need to make quick decisions from insights gleaned in real time from their data. Computing prices for goods sold online, getting a rideshare car to a passenger, predicting traffic flow in a smart city and dynamically controlling networks to best serve customers all depend on quickly analyzing and making sense of streaming data. With the global amount of data expected to reach 175 zettabytes in the next five years, making sense of stored information is clearly a challenge. We caught up with Simon Crosby, CTO of Swim, to talk about the challenges of the next generation of apps and how stream processing and continuous intelligence can help.

Amazon Alexa can easily play a requested song or tell you the weather, but no one expects her to analyze road and vehicle conditions when providing travel guidance. We expect so little from apps like Alexa (or Siri or Google Home) because these virtual assistants still use traditional methods of machine learning to respond to simple queries, rather than accounting for contextual information as human brains do.

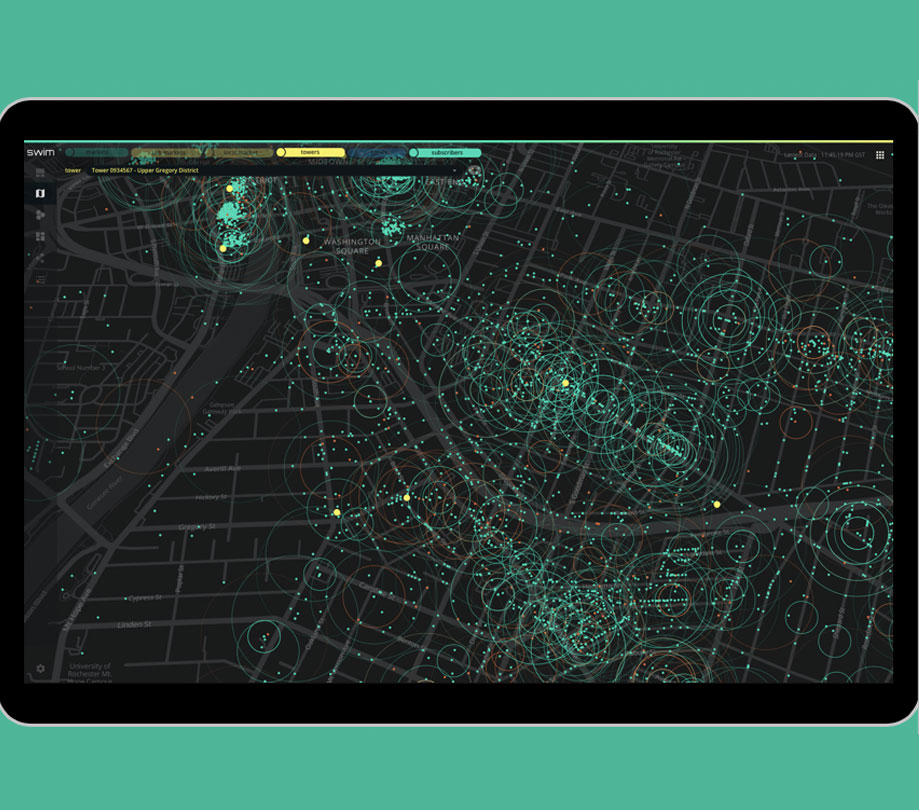

In the enterprise, this type of data analysis can’t support business decisions because the demands of dynamic companies for continuous intelligence derived from streaming data sources are too great. Swim, a company building contextual analytics platforms for streaming data, is helping developers build applications specifically for this type of environment, where analytics can be done in real time, at the source.

We spoke with Simon Crosby, CTO of Swim, about the things to keep in mind when building the next generation of enterprise apps. Simon discusses continuous intelligence and how app developers can best take advantage of streams of data from endpoint devices and sensors in real-time.

ADM: The global amount of data is expected to reach 175 zettabytes in the next five years, so making sense of stored information is clearly a challenge. What are the key differences between continuous intelligence apps and, say, “cloud-native” stacks?

Crosby: Whereas cloud-native stacks build on a powerful triumvirate of stateless APIs, microservices, and stateful databases, continuous intelligence needs different abstractions:

- “Things” are stateful: The stateless model serves cloud services well because it lets any server process an event, but this is a drawback when processing streaming data: For each new event, an app must load (code and) the previous state from a database, compute a new value, and store the new state back in the database - a million times slower than stateful computing.

- Algorithms must be adapted for boundless data: Algorithms that analyze, learn or predict must be adapted to deal with never-ending data – computing on each new event.

- Context is vital: Events aren’t meaningful in isolation. Real-world relationships between things, such as containment, proximity and adjacency, are key.
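To make the first two points concrete, here is a minimal Python sketch, not taken from the interview or from Swim's platform. It assumes each "thing" is a sensor identified by a hypothetical string id, keeps one state object per thing in memory, and uses Welford's online algorithm to update a mean and variance on every event, so no database round trip or re-scan of past data is needed. The names `RunningStats`, `state` and `on_event` are illustrative only.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class RunningStats:
    """Welford's online algorithm: mean and variance updated per event."""
    count: int = 0
    mean: float = 0.0
    m2: float = 0.0  # running sum of squared deviations from the mean

    def update(self, value: float) -> None:
        self.count += 1
        delta = value - self.mean
        self.mean += delta / self.count
        self.m2 += delta * (value - self.mean)

    @property
    def variance(self) -> float:
        # Sample variance; reported as 0.0 until two observations exist.
        return self.m2 / (self.count - 1) if self.count > 1 else 0.0


# One in-memory state object per "thing" (hypothetical sensor ids).
state = defaultdict(RunningStats)


def on_event(thing_id: str, value: float) -> RunningStats:
    """Handle one event against state that is already held in memory."""
    stats = state[thing_id]
    stats.update(value)
    return stats


events = [("sensor-a", 2.0), ("sensor-a", 4.0), ("sensor-b", 10.0), ("sensor-a", 6.0)]
for thing_id, value in events:
    on_event(thing_id, value)

print(state["sensor-a"].mean, state["sensor-a"].variance)
```

Because the statistics are updated incrementally, the work per event is constant no matter how long the stream runs, which is the property a boundless stream requires.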

ADM: Databases have become much smarter - particularly cloud database services. Are they still good sources of app intelligence?

Crosby: There are hundreds of powerful databases to choose from, but there’s a limit to how much “intelligence” one can expect of a database engine. Databases don’t run applications and can’t make sense of data. And they are typically a slow network hop away from application logic. The trend is toward in-memory stores, grids and caches. But:

- No database, in-memory or other, can understand the meaning of data, drive computation, or deliver real-time responses

- A single event may cause cascading state changes to many related entities, each requiring an evaluation of logical or mathematical predicates, joins, maps, or other aggregations, and execution of business logic that requires thousands of database round trips

- The database as a repository of ‘truth’ needs to be re-examined. Often it is difficult to determine the true state of a source, and a point-in-time value is unlikely to be useful:

  - Distributions, trends, or statistics computed over time may be more useful

  - ML, regression, and other predictive tools use past behavior to predict the future

  - Often data values are themselves estimates

- Correlations and other mathematical relationships between data sources are vital for deep insights and can often only be found in the time domain

ADM: Where does event stream processing fit in? How can a Kafka app developer, for example, accelerate their path to continuous intelligence?

Crosby: Apache Kafka is a popular event streaming platform that performs a key role: It enables any number of sources to publish events to topics on a broker, and any number of applications to subscribe to those topics. But brokers don’t execute applications; they act as a buffer. Instead, an event stream processor analyzes the stream. Ultimately, reasoning about the meaning of an event or set of events requires a stateful system model, and insights that rely on logical or mathematical relationships between data sources and their states are not the domain of the broker.
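The division of labor described here can be sketched in a few lines of Python. This is a toy, in-memory illustration and not Kafka's API: the `Broker` class only buffers events per topic, while the hypothetical `VehicleModel` holds per-vehicle state and derives an insight that no single event contains. Unlike this toy, a real Kafka broker retains events in a log and consumers track their own offsets. All names, the `telemetry` topic and the speed limit are assumptions made for the example.

```python
from collections import defaultdict, deque


class Broker:
    """Toy stand-in for a broker: buffers events per topic, runs no app logic."""

    def __init__(self):
        self.topics = defaultdict(deque)

    def publish(self, topic, event):
        self.topics[topic].append(event)

    def poll(self, topic):
        """Drain and return all buffered events for a topic."""
        queue = self.topics[topic]
        events = list(queue)
        queue.clear()
        return events


class VehicleModel:
    """Stateful processor: remembers the last speed seen for each vehicle."""

    def __init__(self, speed_limit):
        self.speed_limit = speed_limit
        self.last_speed = {}

    def process(self, event):
        vehicle, speed = event["vehicle"], event["speed"]
        previous = self.last_speed.get(vehicle)
        self.last_speed[vehicle] = speed
        # The insight depends on state, not on the event alone: report
        # only the transition from within the limit to over it.
        was_within = previous is None or previous <= self.speed_limit
        if speed > self.speed_limit and was_within:
            return f"{vehicle} started speeding"
        return None


broker = Broker()
for event in [
    {"vehicle": "truck-1", "speed": 50},
    {"vehicle": "truck-1", "speed": 72},
    {"vehicle": "truck-1", "speed": 75},
    {"vehicle": "truck-2", "speed": 40},
]:
    broker.publish("telemetry", event)

model = VehicleModel(speed_limit=65)
alerts = [a for a in map(model.process, broker.poll("telemetry")) if a]
print(alerts)
```

The broker never inspects an event; the single alert comes from the processor comparing each reading with the state it already holds for that vehicle.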

ADM: There are a number of popular streaming analytics products and even open source projects available. What’s the difference?

Crosby: Best known for delivering widget views and KPIs, streaming analytics takes an approach based on regular computation of insights and delivery of the results to UIs used to understand the system at a “summary” level. A large number of tools exist in this category, both general-purpose and vertically integrated.

Managers of large systems, whether containers in the cloud or fleets of trucks, need detailed performance metrics specific to their use cases. They are operations- and outcome-focused: they want to “zoom in” on issues to see problems, often in real time. The meaning of data is critical, so in streaming analytics the meaning of an event is always well defined.

Managers aren’t the only users though: Modern SaaS applications need to deliver contextually useful responses to end-users. Timely, granular, personalized and local responses are crucial, even for applications at massive scale. This kind of capability is beyond the scope of streaming analytics applications.

Streaming analytics applications use a defined schema or model, combined with business logic, to translate event data into state changes in the system model. The meaning of state changes triggered by events is the “business logic” part of an application and has a specific interpretation given the use case. For this reason, streaming analytics applications are often domain-specific (for example, in application performance management) or designed for a business-specific purpose.

ADM: So what is different about continuous intelligence?

Crosby: Applications that demand continuous intelligence at scale are characterized by a need to understand streaming data in context and respond in real time. They fuse streaming and traditional data, analyzing, learning, and predicting on the fly in response to streaming data from many sources. They:

- Always have the current answer, not the results of the last batch run. Algorithms need to be adapted to analyze, estimate, anticipate and predict continuously — computation flow is driven by data.

- Continuously analyze new data in context. Because a single event can cause many database round-trips, the only way to analyze, learn and predict in real-time is to use stateful, in-memory processing. Analysis has to be continuous because data streams are boundless.

- Analyze and visualize in context: An event is relevant in the context in which it was generated, so dynamic inter-relationships between data sources are crucial. Real-world relatedness is vital for applications that reason about collective meaning.

- Analyze the continuous, concurrent state changes of data sources, not their raw data. Fluid real-world relationships must be continuously re-evaluated, and apps must respond immediately, in context, and in real time.

The flow of continuous intelligence and automatic responses has to be computed and responses delivered concurrently. Tens or hundreds of millions of complex evaluations may need to be executed at the same time — for each “thing” and all of its current relationships.

Finally, the results of analysis, learning and prediction must be visually available at all levels of detail. A KPI that shows aggregate information, such as all traffic lights in a city, should update in real-time to reflect what’s happening on the ground. Real-time visualization is a critical aspect of continuous intelligence.
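As a rough illustration of the traffic-light example, the following Python sketch keeps a live aggregate that changes only when a contained light changes state. It is a simplified, single-threaded model under stated assumptions, not Swim's API: the `City` and `TrafficLight` classes, the light ids and the phase names are all hypothetical.

```python
from collections import Counter


class City:
    """Aggregate that contains lights and keeps a live count per phase."""

    def __init__(self):
        self.phase_counts = Counter()

    def on_change(self, old, new):
        # Adjust the KPI incrementally instead of re-scanning every light.
        if old is not None:
            self.phase_counts[old] -= 1
        self.phase_counts[new] += 1


class TrafficLight:
    """Stateful "thing" that propagates only real state changes."""

    def __init__(self, light_id, city):
        self.light_id = light_id
        self.city = city
        self.phase = None

    def on_event(self, phase):
        if phase == self.phase:
            return  # raw event repeats the current state: nothing to do
        old, self.phase = self.phase, phase
        self.city.on_change(old, phase)


city = City()
lights = {name: TrafficLight(name, city) for name in ("a", "b", "c")}

for light_id, phase in [
    ("a", "red"), ("b", "red"), ("c", "green"),
    ("a", "green"), ("a", "green"),  # the last event is a duplicate
]:
    lights[light_id].on_event(phase)

print(city.phase_counts["red"], city.phase_counts["green"])
```

The aggregate is driven by state changes rather than raw events, so the duplicate reading from light `a` costs nothing and the city-level view is current after every event.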

Simon Crosby

Simon Crosby is CTO at Swim. Swim offers the first open core, enterprise-grade platform for continuous intelligence at scale, providing businesses with complete situational awareness and operational decision support at every moment. Simon co-founded Bromium (now HP SureClick) in 2010 and currently serves as a strategic advisor. Previously, he was the CTO of the Data Center and Cloud Division at Citrix Systems; founder, CTO, and vice president of strategy and corporate development at XenSource; and a principal engineer at Intel, as well as a faculty member at Cambridge University, where he led the research on network performance and control and multimedia operating systems.

Read more: https://www.swim.ai
